Associate Professor Lynn Cazabon, Department of Visual Arts, PI

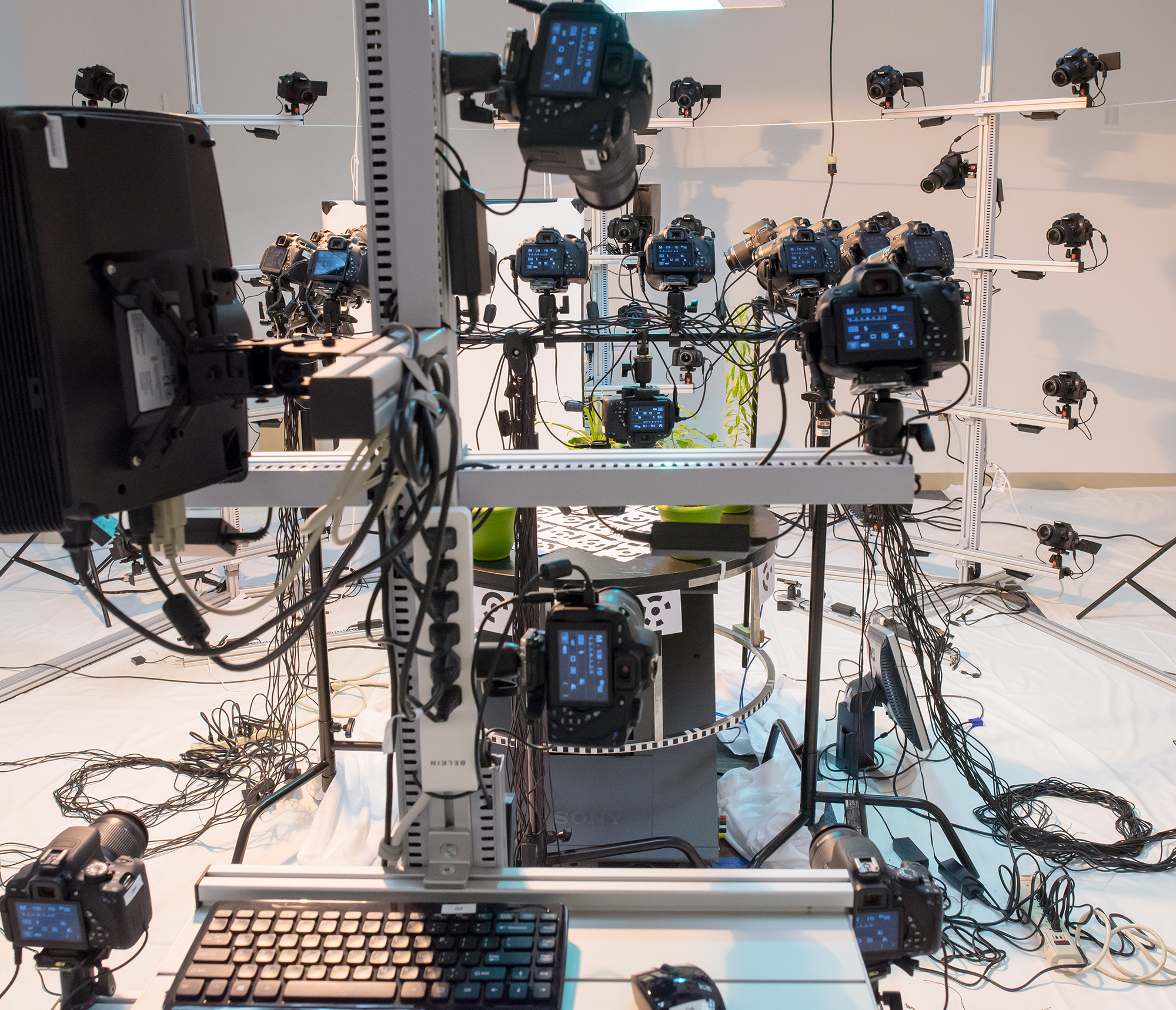

Professor Cazabon reserved the scanning rig for several months to create a 3D time-lapse sequence of growing plants. An automated turntable has been added to the rig and programmed into the control software so that five plants can be recorded over each 60 to 90 day growth cycle. The rig can scan autonomously, 24/7. One of the largest hurdles was working out how to handle the more than 50 terabytes of data being generated.

Professor Cazabon states:

“Although plants do not “see” in the same way that humans or other animals do, the notion that plants perceive and respond to environmental input has been around since Darwin’s plant experiments in the late 19th century. More recently, research into the existence of “plant neurobiology” has been published, leading to new and rich interdisciplinary inquiries into the evolutionary purposes of the many ways that plants sense their surroundings, including through analogues to sight, hearing, touch, taste and smell as well as through perception of electrical, magnetic, and chemical input.

There is also evidence that plants communicate with neighboring plants, manipulate insects and animals, and express behavior pointing towards abilities to learn and remember experiences. The concept that plants are active agents responding and reacting to their environment and altering their own behavior as a result, versus passive vessels, represents a profound shift in thinking about the gulf between plant and animal kingdoms.

The goal of Plantelligence is to shift viewers’ preconceived ideas about plants as static objects towards a more truthful understanding of the crucial role they play in the environment. I believe that the concepts behind plant intelligence can be compelling to a wide, non-scientific audience because of the central role that plants play in human life and dissemination of these ideas will encourage more empathetic views of nature in general.”

Custom Configuring the Scanning Rig for Specialized Research

August 3, 2016

Professor Lynn Cazabon’s project, Plantelligence, requires extensive customization of the scanning rig. More cameras have been added to better capture the intricate detail of the plants. The plants also need to be scanned in time-lapse, recording their growth over 2 to 3 months.

Not only is Professor Cazabon’s project innovative; configuring the rig to automatically handle time-lapse capture, extra cameras, grow-light control, a robotic registering turntable, and multiple plants is a research project in itself. Mark Murnane, a Computer Science and Electrical Engineering undergraduate, is doing the hardware and software configuration for this effort. Mark is in his senior year at UMBC and spent last year interning with Direct Dimensions, helping build this rig. This year he is overseeing its use, upgrades, and specialization requirements.